Whisper Provider Setup¶

Note: Whisper is capable of transcribing many languages, but can only translate a language into English. It does not support translating to other languages.

Whisper (based on OpenAI Whisper) uses a neural network powered by your CPU or NVIDIA graphics card to generate subtitles for your media.

The provider works best when it knows the audio language ahead of time. Enable the 'Deep analyze media file to get audio tracks language' option for the best results.

Whisper-generated subtitles have a fixed score: 220/360 (~61%) for episodes and 60/180 (~33%) for movies. If you want them to be "downloaded" automatically, lower the minimum score so that it does not exceed these values.

The scores are fixed because Whisper always matches the same fields: series, season, and episode for episodes, and title for movies (see the get_matches method in morpheus65535:bazarr/custom_libs/subliminal_patch/providers/whisperai.py). These matches are scored against the point values defined in morpheus65535:bazarr/custom_libs/subliminal_patch/providers/whisperai.py. The score may be 1 point higher (221/360 or 61/180) if your language profile's hearing impaired preference matches the subtitle.
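As a worked example of the arithmetic above, the following sketch converts the fixed scores into percentages and checks them against a minimum score. The function names are hypothetical, and the "greater than or equal" comparison is an assumption about how the minimum is applied, not something taken from Bazarr's code.

```python
# Fixed Whisper scores quoted above: (whisper_score, maximum_score).
WHISPER_SCORES = {
    "episode": (220, 360),
    "movie": (60, 180),
}


def whisper_percent(media_type: str, hearing_impaired_match: bool = False) -> float:
    """Return the fixed Whisper score as a percentage of the maximum score."""
    score, maximum = WHISPER_SCORES[media_type]
    if hearing_impaired_match:
        score += 1  # the +1 described above
    return 100.0 * score / maximum


def passes_minimum(media_type: str, minimum_percent: float) -> bool:
    """Hypothetical check: would a Whisper subtitle clear this minimum score?

    Assumes a subtitle is accepted when its percentage is >= the minimum.
    """
    return whisper_percent(media_type) >= minimum_percent


if __name__ == "__main__":
    for kind in WHISPER_SCORES:
        print(kind, round(whisper_percent(kind), 1))
```

Under these assumptions, a minimum score of 90 rejects Whisper subtitles for both episodes and movies, while a minimum of 33 accepts both.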

whisper-asr-webservice [SubGen]¶

Bazarr's Whisper provider communicates with SubGen. Refer to its documentation for more assistance in setting it up.

Choosing a Model¶

Larger models are more accurate but take longer to run. Choose the largest model that your CPU/GPU can run in a time you find acceptable.

Available ASR_MODELs are tiny, base, small, medium, large (only OpenAI Whisper), large-v1, large-v2 and large-v3 (only OpenAI Whisper for now).

Choosing a backend¶

whisper-asr-webservice supports multiple backends. Currently, there are two available options:

- openai_whisper (original implementation)

- faster_whisper

Docker Installation¶

The complete Docker Compose file can be found here.

Prerequisites for the examples below¶

cache dir for the models¶

mkdir -p {subgenai_data_folder}/models

.env file¶

subgenai_data_folder=/path/to/your/subgen

subgen.env file¶

WHISPER_MODEL=medium

WHISPER_THREADS=4

WEBHOOKPORT=9000

TRANSCRIBE_DEVICE=cuda

DEBUG=True

CLEAR_VRAM_ON_COMPLETE=True

APPEND=False

TRANSCRIBE_OR_TRANSLATE=transcribe

NAMESUBLANG=ai

GPU (recommended)¶

services:
  subgenai:
    depends_on:
      bazarr:
        condition: service_healthy
    image: 'mccloud/subgen:latest'
    container_name: subgenai
    volumes:
      - '${subgenai_data_folder}/models:/subgen/models'
      - '${subgenai_data_folder}/subgen.env:/subgen/subgen.env:ro'
    environment:
      LOG_LEVEL: debug
      NAME_SERVERS: 9.9.9.9
      PROCADDEDMEDIA: true
      PROCMEDIAONPLAY: false
      PLEXTOKEN: plextoken
      PLEXSERVER: 'http://127.0.0.1:32400'
      CONCURRENT_TRANSCRIPTIONS: 2
      USE_PATH_MAPPING: false
      MODEL_PATH: ./models
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1
              capabilities:
                - gpu

CPU¶

services:
  subgenai:
    depends_on:
      bazarr:
        condition: service_healthy
    image: 'mccloud/subgen:cpu'
    container_name: subgenai
    volumes:
      - '${subgenai_data_folder}/models:/subgen/models'
      - '${subgenai_data_folder}/subgen.env:/subgen/subgen.env:ro'
    environment:
      LOG_LEVEL: debug
      NAME_SERVERS: 9.9.9.9
      PROCADDEDMEDIA: true
      PROCMEDIAONPLAY: false
      PLEXTOKEN: plextoken
      PLEXSERVER: 'http://127.0.0.1:32400'
      CONCURRENT_TRANSCRIPTIONS: 2
      USE_PATH_MAPPING: false
      MODEL_PATH: ./models

Docker on Windows¶

You can run the ASR container on your Windows PC. This is especially useful if it's your only way to get access to a powerful GPU. Follow these instructions for more information.

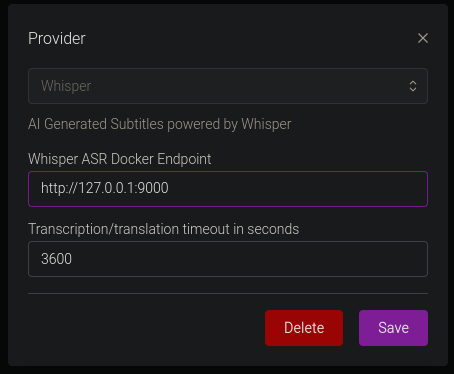

Bazarr Configuration¶

Set the endpoint to the address of the server hosting the Whisper container (127.0.0.1 if it runs on the same machine as Bazarr). The endpoint must start with http://. If requests keep timing out on long movies or TV shows, increase the timeout.
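Before pasting an endpoint into Bazarr, it can help to sanity-check its shape. The sketch below is a hypothetical helper (not part of Bazarr or SubGen) that enforces the http:// prefix mentioned above and rejects values with no host. The port 9000 in the example is taken from the WEBHOOKPORT value in the sample subgen.env file. Stripping a trailing slash is only a tidiness choice in this sketch.

```python
from urllib.parse import urlsplit


def check_endpoint(endpoint: str) -> str:
    """Return a tidied endpoint, or raise ValueError if it looks wrong.

    Hypothetical helper: it only checks the shape of the URL and does not
    contact the server.
    """
    cleaned = endpoint.strip().rstrip("/")
    if not cleaned.startswith("http://"):
        raise ValueError("endpoint must start with http://")
    if not urlsplit(cleaned).hostname:
        raise ValueError("endpoint is missing a host")
    return cleaned


if __name__ == "__main__":
    # Same-machine example, using the port from the sample subgen.env file.
    print(check_endpoint("http://127.0.0.1:9000/"))
```

A value such as 127.0.0.1:9000, with no scheme, raises ValueError in this sketch.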

Troubleshooting¶

Language detection¶

When Bazarr doesn't know the language of the media you're trying to get subtitles for, Whisper must guess. It only uses the first 30 seconds of audio in order to detect the language. To ensure best results, use media which has the audio language specified in the file, and make sure the deep analyze option is turned on.
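To see whether a file actually carries an audio language tag, one option outside Bazarr is to inspect it with ffprobe (for example `ffprobe -v error -show_streams -of json FILE`) and look at each audio stream's tags. The sketch below assumes you have already loaded that JSON output into a dictionary. The function name is hypothetical, and treating "und" as untagged is an assumption of this example.

```python
def untagged_audio_streams(probe: dict) -> list[int]:
    """Return the indexes of audio streams with no usable language tag.

    `probe` is expected to look like ffprobe's JSON output: a "streams"
    list whose entries have "index", "codec_type" and optional "tags".
    """
    missing = []
    for stream in probe.get("streams", []):
        if stream.get("codec_type") != "audio":
            continue  # skip video, subtitle and data streams
        language = stream.get("tags", {}).get("language", "")
        if language.lower() in ("", "und"):
            missing.append(stream["index"])
    return missing


# Hand-written sample in the shape of ffprobe's JSON output.
sample = {
    "streams": [
        {"index": 0, "codec_type": "video"},
        {"index": 1, "codec_type": "audio", "tags": {"language": "eng"}},
        {"index": 2, "codec_type": "audio", "tags": {"title": "Commentary"}},
        {"index": 3, "codec_type": "audio", "tags": {"language": "und"}},
    ]
}

if __name__ == "__main__":
    print(untagged_audio_streams(sample))
```

Any stream index this returns is a track for which Whisper would have to guess the language.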

Bazarr's implementation of Whisper is still in early stages. If you have any issues, follow these instructions:

- Enable debug logging

- Join the Bazarr Discord and post your logs and a description of the problem in the #whisper channel

- Include an @Alex mention to get my attention